SparseStreet

Sparse Gaussian Splatting for Real-Time Street Scene Simulation

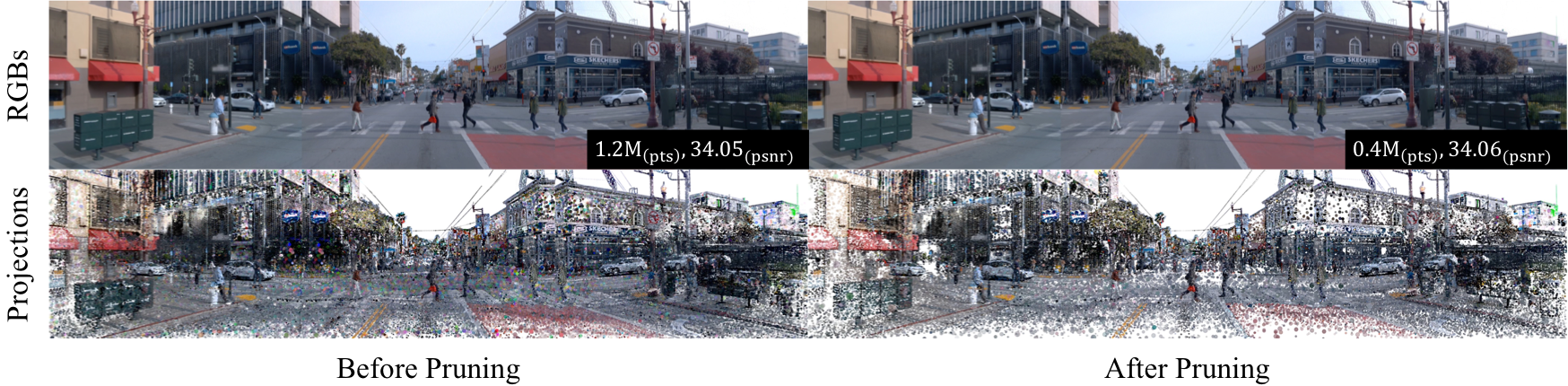

Gaussian projections before and after pruning. SparseStreet effectively removes redundant primitives in background regions while preserving dense representation for dynamic objects (vehicles, pedestrians), enabling real-time rendering without sacrificing visual fidelity.

While 3D Gaussian Splatting has shown promising results in street scene reconstruction, existing methods require massive numbers of Gaussian primitives to capture fine details, leading to prohibitive storage costs and slow rendering. We observe that dynamic objects (e.g., vehicles and pedestrians) demand high-fidelity representations to maintain temporal consistency, while static background regions often contain substantial redundancy. Motivated by this, we propose SparseStreet, a general compression framework for street scenes. First, we introduce a node-aware learnable pruning strategy that removes low-contribution Gaussian primitives while preserving visually critical regions. Second, once the scene representation stabilizes, we apply background compression to further reduce redundancy in static regions. Our method preserves the geometry and appearance of dynamic objects while significantly reducing the total number of Gaussian primitives. Extensive experiments on the Waymo and nuScenes datasets show that SparseStreet achieves a compression ratio of up to 80% with minimal quality degradation, enabling resource-efficient, high-fidelity dynamic scene reconstruction.

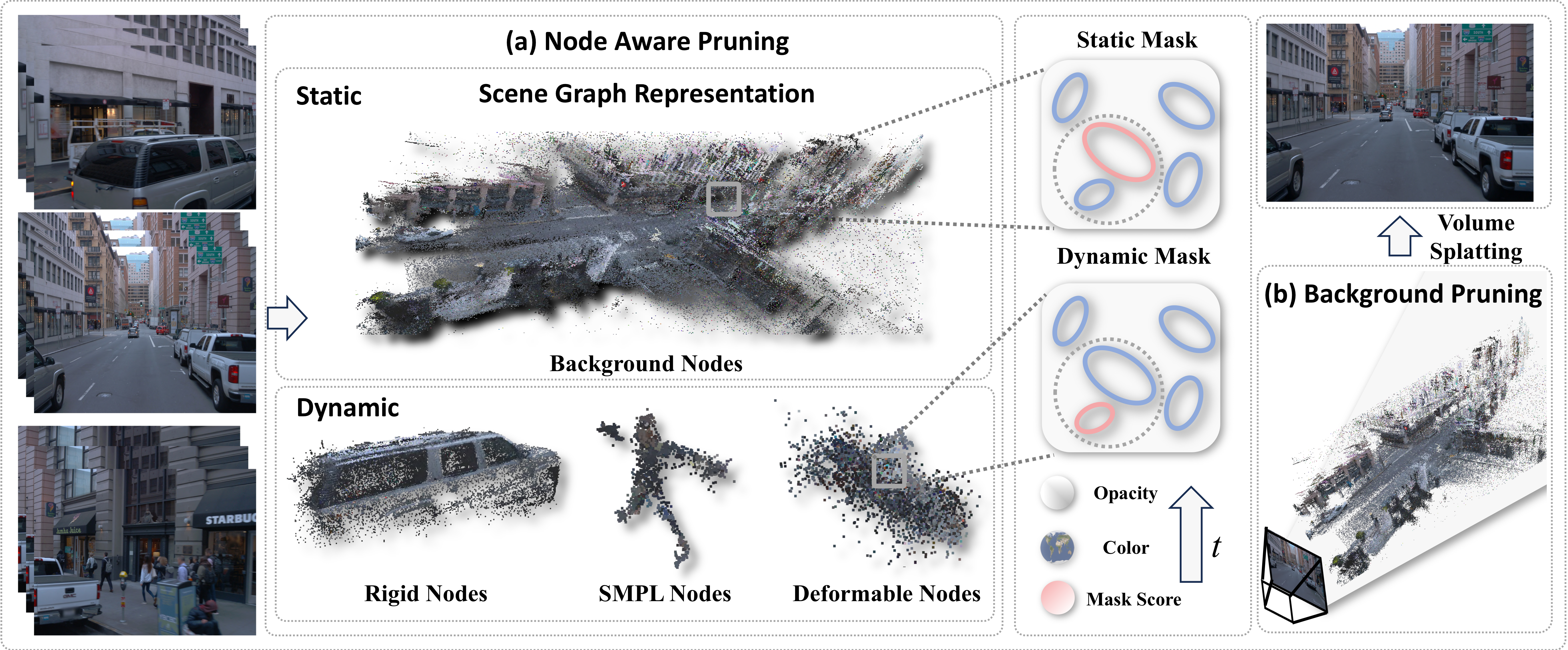

SparseStreet framework. Our compression pipeline has two stages. (1) Node-aware pruning: each Gaussian primitive is augmented with a learnable masking score, and a node-aware regularization strategy applies different pruning strengths to foreground dynamic nodes (vehicles, pedestrians) and static background nodes. (2) Background compression: after the scene stabilizes, global importance metrics that combine blending weights and projected areas are used to remove remaining redundant background Gaussians.
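The first stage can be illustrated with a minimal sketch. It assumes the masking score is a per-Gaussian logit passed through a sigmoid, and that the node-aware regularizer is a weighted sum of the resulting soft masks. The names `LAMBDA`, `mask_regularization`, and `keep_flags`, the strength values, and the 0.5 threshold are all hypothetical and not taken from the paper.

```python
import math

# Hypothetical per-node regularization strengths (assumed values):
# background Gaussians are pushed toward pruning harder than dynamic ones.
LAMBDA = {"background": 1e-2, "vehicle": 1e-4, "pedestrian": 1e-4}


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def mask_regularization(mask_logits, node_types, strengths=LAMBDA):
    """Sum of soft mask values, each weighted by its node's strength.

    Minimizing this term alongside a rendering loss pushes mask values
    toward zero, more strongly for nodes with a larger strength.
    """
    return sum(strengths[n] * sigmoid(m) for m, n in zip(mask_logits, node_types))


def keep_flags(mask_logits, threshold=0.5):
    """Hard decision after training: keep a Gaussian whose soft mask exceeds the threshold."""
    return [sigmoid(m) > threshold for m in mask_logits]


if __name__ == "__main__":
    logits = [2.0, -3.0, 0.5]
    nodes = ["background", "background", "vehicle"]
    print(mask_regularization(logits, nodes))
    print(keep_flags(logits))
```

With equal mask logits, a background Gaussian contributes a larger penalty than a vehicle or pedestrian Gaussian, so under this assumed form the optimizer has more incentive to switch off background primitives.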

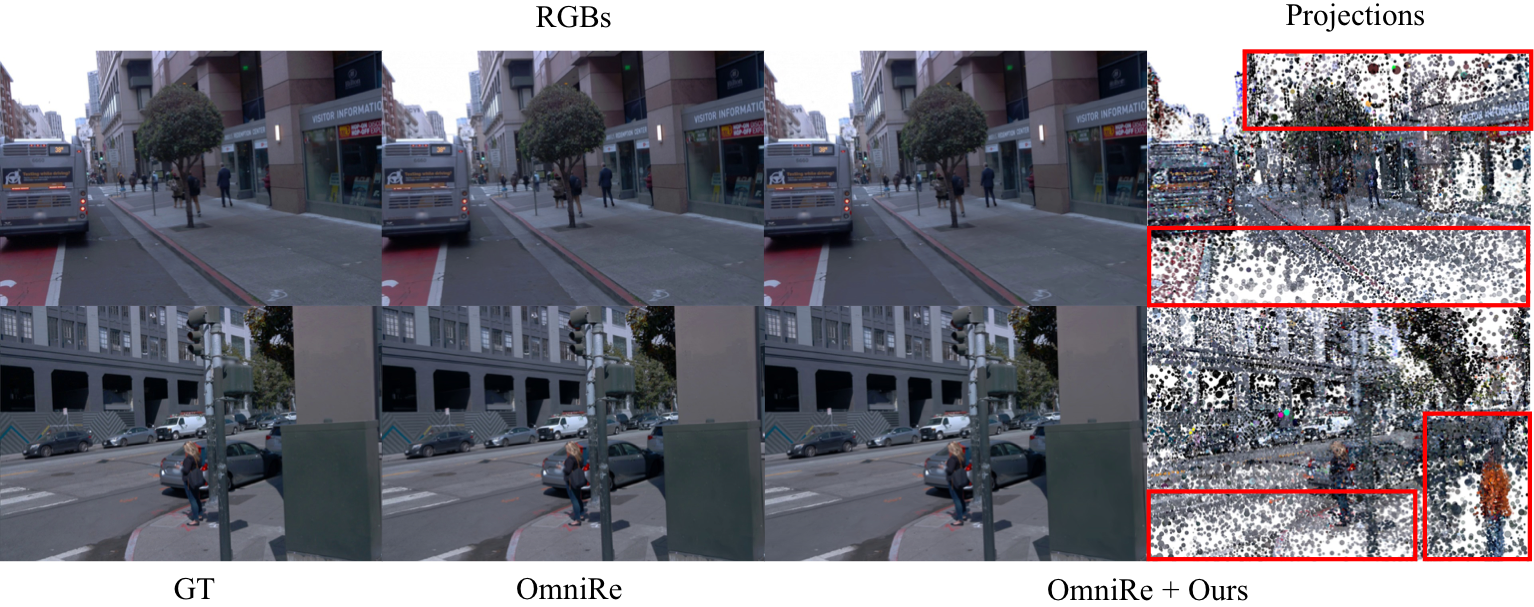

Qualitative comparisons of Ground Truth (GT), OmniRe, and OmniRe + Ours. The rightmost column shows Gaussian projections of our method. Red boxes highlight that static elements (ground plane, buildings) are effectively represented with fewer Gaussians, while dynamic objects (pedestrians) maintain dense representation for better quality.

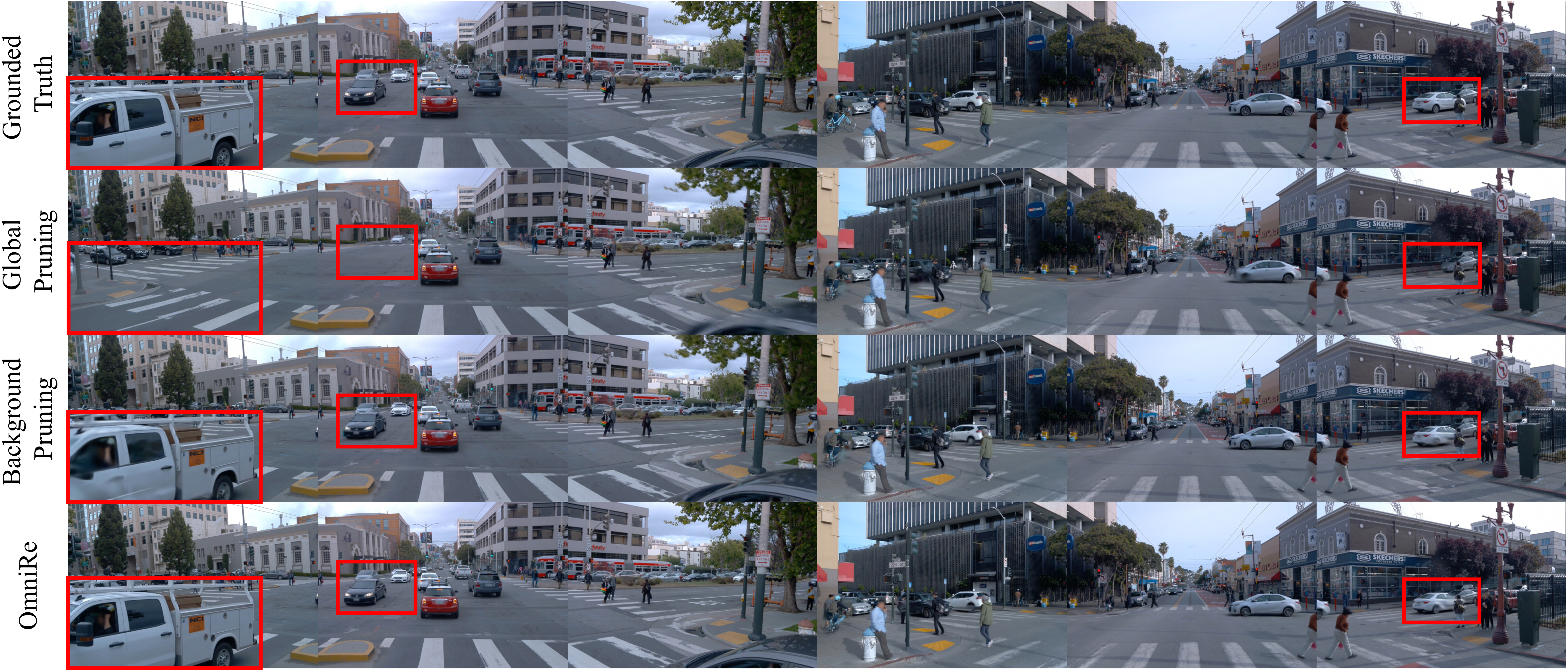

Comparison of pruning strategies on dynamic objects across three camera views. Row 1: ground truth. Row 2: global pruning, where moving vehicles lose parts because they are present in the sequence only briefly. Row 3: our background pruning, which preserves dynamic objects while compressing static elements. Row 4: OmniRe baseline.
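The difference between global pruning and background-only pruning can be sketched in a few lines. The example assumes the importance score is the sum over views of blending weight times projected area. The framework description only says the two quantities are combined, so the product form, the function names, and the toy numbers are illustrative assumptions.

```python
def importance_scores(blend_weights, projected_areas):
    """Per-Gaussian score: sum over views of blending weight x projected area.

    Both inputs are lists of per-view lists, indexed [view][gaussian].
    The product form is an assumption made for illustration.
    """
    n = len(blend_weights[0])
    return [
        sum(w[i] * a[i] for w, a in zip(blend_weights, projected_areas))
        for i in range(n)
    ]


def background_prune(scores, is_background, prune_ratio):
    """Return keep flags, dropping the lowest-scoring fraction of background Gaussians only."""
    bg = sorted((i for i, b in enumerate(is_background) if b), key=lambda i: scores[i])
    drop = set(bg[: int(len(bg) * prune_ratio)])
    return [i not in drop for i in range(len(scores))]


if __name__ == "__main__":
    # Two views, four Gaussians; Gaussian 2 belongs to a briefly visible vehicle.
    weights = [[0.9, 0.1, 0.05, 0.4], [0.8, 0.0, 0.05, 0.5]]
    areas = [[10, 2, 1, 6], [12, 0, 1, 5]]
    is_bg = [True, True, False, True]
    scores = importance_scores(weights, areas)
    print(background_prune(scores, is_bg, prune_ratio=0.5))
```

In this toy input the vehicle Gaussian has the lowest score of all four, so a global ranking would remove it first. Restricting the ranking to background Gaussians removes Gaussian 1 instead and leaves the dynamic object untouched.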

| Method | Full Image (Recon) PSNR↑ | Full Image (Recon) SSIM↑ | Human (Recon) PSNR↑ | Human (Recon) SSIM↑ | Vehicle (Recon) PSNR↑ | Vehicle (Recon) SSIM↑ | Full Image (NVS) PSNR↑ | Full Image (NVS) SSIM↑ | Human (NVS) PSNR↑ | Human (NVS) SSIM↑ | Vehicle (NVS) PSNR↑ | Vehicle (NVS) SSIM↑ | # Gauss↓ | FPS↑ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| EmerNeRF | 31.93 | 0.902 | 22.88 | 0.578 | 24.65 | 0.723 | 29.67 | 0.883 | 20.32 | 0.454 | 22.07 | 0.609 | - | - |
| 3DGS | 26.00 | 0.912 | 16.88 | 0.414 | 16.18 | 0.425 | 25.57 | 0.906 | 16.62 | 0.387 | 16.00 | 0.407 | - | - |
| HUGS | 28.26 | 0.923 | 16.23 | 0.404 | 24.31 | 0.794 | 27.65 | 0.914 | 15.99 | 0.378 | 23.27 | 0.748 | - | - |
| DeformGS | 27.97 | 0.923 | 17.23 | 0.429 | 19.14 | 0.544 | 26.47 | 0.884 | 16.84 | 0.391 | 18.21 | 0.487 | 0.64M | 37.08 |
| PVG | 32.68 | 0.941 | 24.96 | 0.726 | 24.36 | 0.763 | 28.73 | 0.881 | 21.95 | 0.565 | 21.43 | 0.617 | 1.51M | 9.13 |
| StreetGS | 28.73 | 0.932 | 16.54 | 0.401 | 26.46 | 0.848 | 27.02 | 0.887 | 16.27 | 0.368 | 23.99 | 0.761 | 0.87M | 21.60 |
| OmniRe | 34.26 | 0.956 | 26.99 | 0.825 | 27.79 | 0.886 | 29.86 | 0.900 | 23.16 | 0.674 | 24.52 | 0.786 | 1.55M | 46.15 |
| StreetGS + Ours | 28.42 | 0.924 | 16.51 | 0.392 | 26.33 | 0.847 | 27.04 | 0.889 | 16.18 | 0.362 | 23.72 | 0.753 | 0.29M | 57.66 |
| OmniRe + Ours | 34.05 | 0.952 | 26.88 | 0.818 | 27.48 | 0.878 | 29.75 | 0.897 | 23.15 | 0.667 | 24.49 | 0.782 | 0.46M | 80.22 |

| Method | Full Image (Recon) PSNR↑ | Full Image (Recon) SSIM↑ | Human (Recon) PSNR↑ | Human (Recon) SSIM↑ | Vehicle (Recon) PSNR↑ | Vehicle (Recon) SSIM↑ | Full Image (NVS) PSNR↑ | Full Image (NVS) SSIM↑ | Human (NVS) PSNR↑ | Human (NVS) SSIM↑ | Vehicle (NVS) PSNR↑ | Vehicle (NVS) SSIM↑ | # Gauss↓ | FPS↑ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| DeformGS | 32.31 | 0.924 | 31.76 | 0.900 | 28.18 | 0.864 | 25.01 | 0.728 | 24.02 | 0.570 | 20.96 | 0.574 | 0.43M | 278.80 |
| StreetGS | 32.06 | 0.928 | 31.42 | 0.901 | 29.68 | 0.915 | 24.29 | 0.698 | 23.42 | 0.546 | 21.05 | 0.557 | 0.72M | 117.80 |
| OmniRe | 32.14 | 0.929 | 32.14 | 0.917 | 29.72 | 0.916 | 24.24 | 0.696 | 23.38 | 0.551 | 21.01 | 0.555 | 0.73M | 132.56 |
| StreetGS + Ours | 31.08 | 0.913 | 30.08 | 0.868 | 28.56 | 0.897 | 24.06 | 0.698 | 23.21 | 0.544 | 20.90 | 0.558 | 0.26M | 461.17 |
| OmniRe + Ours | 31.37 | 0.918 | 31.16 | 0.900 | 28.80 | 0.902 | 24.05 | 0.696 | 23.28 | 0.549 | 20.96 | 0.551 | 0.38M | 435.85 |

M = million Gaussian primitives. Recon = Scene Reconstruction. NVS = Novel View Synthesis.
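To read the last two columns as relative gains, the primitive reduction and frame-rate speedup can be computed directly from the tabulated values. The short script below does this for the four baseline and "+ Ours" pairs. The helper names and the "first table" / "second table" labels are ours, and the numbers are copied from the tables as printed.

```python
def reduction_pct(before_m: float, after_m: float) -> float:
    """Percentage of Gaussian primitives removed, given counts in millions."""
    return 100.0 * (1.0 - after_m / before_m)


def speedup(fps_before: float, fps_after: float) -> float:
    """Rendering speedup factor of the pruned model over its baseline."""
    return fps_after / fps_before


# (baseline #Gauss, pruned #Gauss, baseline FPS, pruned FPS), from the tables above.
PAIRS = {
    "first table, StreetGS": (0.87, 0.29, 21.60, 57.66),
    "first table, OmniRe": (1.55, 0.46, 46.15, 80.22),
    "second table, StreetGS": (0.72, 0.26, 117.80, 461.17),
    "second table, OmniRe": (0.73, 0.38, 132.56, 435.85),
}

if __name__ == "__main__":
    for name, (g0, g1, f0, f1) in PAIRS.items():
        print(f"{name}: {reduction_pct(g0, g1):.1f}% fewer Gaussians, "
              f"{speedup(f0, f1):.2f}x FPS")
```

For example, OmniRe in the first table goes from 1.55M to 0.46M primitives, a reduction of about 70%, while its frame rate rises by a factor of about 1.74.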

@inproceedings{wuwu2026sparsestreet,

title = {SparseStreet: Sparse Gaussian Splatting for Real-Time Street Scene Simulation},

author = {Wuwu, Qingpo and Wei, Xiaobao and Chen, Peng and Huang, Nan and Zhao, Zhongyu and Wang, Hao and Lu, Ming and Ma, Ningning and Zhang, Shanghang},

booktitle = {Proceedings of the International Conference on Multimedia Retrieval (ICMR)},

year = {2026},

}